Oklahoma Sooners vs Alabama Crimson Tide Football Player Stats: Complete Breakdown and Insights

When two powerhouse college football programs collide, fans expect nothing short of an electrifying performance. The matchup between the Oklahoma Sooners and the Alabama Crimson Tide delivers exactly that: a clash of elite talent, strategic brilliance, and high-level execution. In this in-depth article, we’ll explore the player stats from the Oklahoma Sooners vs Alabama Crimson Tide matchup, breaking down key performances, standout players, and the tactical insights that define such a high-stakes game.

From quarterbacks leading explosive offenses to defensive units making game-changing plays, this matchup always delivers compelling narratives. Whether you’re a casual fan or a hardcore analyst, understanding the player stats gives you a deeper appreciation of how the game unfolds.

Offensive Player Performance Analysis

The offensive side of the ball often dictates the pace and outcome of games like this. Both Oklahoma and Alabama are known for their dynamic offensive systems, blending speed, precision, and creativity. A close look at the offensive player stats shows how each team leverages its strengths.

Oklahoma’s offense typically revolves around a fast-paced passing attack. Their quarterback often posts impressive yardage numbers, supported by a strong receiving corps. Wide receivers consistently rack up receptions and yards after catch, making them a constant threat. Running backs also contribute significantly, balancing the offense and keeping defenses guessing.

On the other side, Alabama’s offense is known for its efficiency and physicality. Their quarterback combines accuracy with smart decision-making, often maintaining a high completion percentage. Alabama’s running game is particularly dominant, with running backs gaining crucial yards and controlling the tempo. The offensive line plays a key role, providing protection and opening lanes.

“Offense wins games, but elite execution separates champions from contenders.”

Defensive Standouts and Key Stats

Defense is where games are often won, especially in tightly contested matchups. The defensive stats from this matchup reveal how each unit shifts momentum and creates turning points.

Oklahoma’s defense has improved significantly in recent years, focusing on speed and aggressive play-calling. Linebackers lead the charge with tackles and blitz pressure, while the secondary works to disrupt passing lanes. Key defensive stats often include tackles for loss, sacks, and interceptions, which can swing the game in critical moments.

Alabama’s defense, traditionally one of the best in college football, thrives on discipline and physical dominance. Defensive linemen consistently apply pressure on the quarterback, while linebackers excel in both run-stopping and pass coverage. Their secondary is known for tight coverage and forcing turnovers.

The ability to limit big plays and capitalize on opponent mistakes often determines the outcome in games of this magnitude.

Quarterback Comparison and Impact

Quarterbacks are the centerpiece of any football game, and this matchup is no exception. A detailed look at the passing stats highlights how each quarterback influences the game.

Oklahoma’s quarterback typically excels in high-tempo offenses, delivering quick passes and extending plays with mobility. Passing yards, touchdowns, and completion rate are key indicators of performance. Their ability to read defenses and adjust plays at the line is crucial.

Alabama’s quarterback brings a balanced approach, combining strong arm talent with composure under pressure. Efficiency is the hallmark here: low interception rates and consistent third-down conversions. Their leadership on the field often sets the tone for the entire team.

Both quarterbacks must handle intense defensive pressure while maintaining accuracy and decision-making, making their performance one of the most critical factors.

Running Back Contributions and Ground Game

The ground game plays a vital role in controlling the clock and establishing dominance. The rushing stats from this matchup show how running backs influence the flow of the game.

Oklahoma’s running backs are versatile, contributing both in rushing and receiving. They often exploit defensive gaps with speed and agility, turning short gains into explosive plays. Their ability to complement the passing game makes the offense unpredictable.

Alabama’s running backs are known for their power and consistency. They excel in breaking tackles and gaining tough yards, especially in short-yardage situations. Their performance often dictates the pace of the game, allowing Alabama to maintain control.

A strong running game not only boosts offensive efficiency but also reduces pressure on the quarterback.

Wide Receivers and Passing Game Efficiency

Wide receivers are game-changers, capable of turning the tide with a single play. The receiving stats show how the passing game evolves over the course of the matchup.

Oklahoma’s receivers are known for their speed and route-running precision. They create separation easily, allowing the quarterback to deliver accurate passes. Yards per reception and touchdown catches are key metrics that highlight their impact.

Alabama’s receiving corps combines size, strength, and athleticism. They excel in contested catches and red-zone situations, making them reliable targets. Their ability to stretch the field opens up opportunities for the running game as well.

The chemistry between quarterbacks and receivers is essential, often determining the success of offensive drives.

Special Teams and Hidden Game-Changing Stats

Special teams often go unnoticed, but they can significantly influence the outcome of a game. In a matchup this closely contested, special teams performance can be the deciding factor.

Oklahoma’s special teams focus on speed and execution, with return specialists capable of breaking long returns. Field goal accuracy and punting efficiency are also critical components.

Alabama’s special teams are known for consistency and discipline. Reliable kickers and strategic punting help maintain field position advantage. Coverage units play a crucial role in limiting opponent returns.

“Special teams may not always grab headlines, but they quietly win championships.”

Key Player Stats Table

Below is a simplified comparison table highlighting typical team and player stat ranges from such matchups:

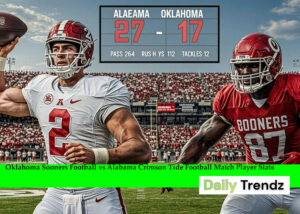

| Category | Oklahoma Sooners | Alabama Crimson Tide |
|---|---|---|
| Passing Yards | 280–350 yards | 240–320 yards |
| Rushing Yards | 120–180 yards | 150–220 yards |
| Receiving Yards | 250+ yards | 220+ yards |
| Tackles (Top Player) | 8–12 tackles | 9–14 tackles |
| Sacks | 2–4 sacks | 3–5 sacks |
| Interceptions | 1–2 | 1–3 |

This table reflects how balanced and competitive the matchup usually is, with both teams showcasing elite talent across all positions.

Coaching Strategy and Tactical Execution

Coaching plays a massive role in shaping the outcome of the game. The player stats often reflect how effective each team’s strategy was.

Oklahoma’s coaching staff typically emphasizes offensive creativity and tempo. Play-calling is designed to exploit defensive weaknesses and keep opponents off balance. Adjustments during halftime are often crucial.

Alabama’s coaching philosophy focuses on discipline, preparation, and execution. Their ability to adapt to different game situations sets them apart. Defensive adjustments, in particular, often neutralize high-powered offenses.

The chess match between coaching staffs adds another layer of excitement to the game.

Momentum Shifts and Game-Changing Moments

Every game has defining moments that shape the final result. In the player stats from this matchup, those moments show up as key plays and statistical spikes.

Turnovers, big plays, and critical third-down conversions often shift momentum. Oklahoma may rely on explosive plays to gain an advantage, while Alabama focuses on sustained drives and defensive stops.

Understanding these moments helps explain how the game unfolds beyond just the final score.

Conclusion

The player stats from the Oklahoma Sooners vs Alabama Crimson Tide matchup offer a fascinating glimpse into one of college football’s most exciting matchups. From offensive firepower to defensive resilience, every aspect of the game contributes to the overall spectacle.

Both teams bring unique strengths and strategies, making each encounter unpredictable and thrilling. By analyzing player stats, fans can gain a deeper understanding of what drives success on the field. Whether it’s a standout quarterback performance or a game-changing defensive play, these stats tell the story behind the action.

FAQ Section

What are the key highlights of the player stats in an Oklahoma Sooners vs Alabama Crimson Tide game?

The key highlights include quarterback performance, rushing efficiency, defensive impact, and turnover statistics. These elements collectively determine the outcome and showcase the strengths of both teams.

How do quarterbacks influence the player stats in an Oklahoma vs Alabama game?

Quarterbacks play a central role by controlling the offense, making strategic decisions, and delivering accurate passes. Their stats often reflect overall team performance and efficiency.

Which team typically has stronger defensive stats when Oklahoma plays Alabama?

Alabama traditionally boasts stronger defensive stats due to its disciplined system and physical play style. However, Oklahoma has shown significant improvement in recent seasons.

Why are running backs important in the Oklahoma vs Alabama matchup?

Running backs help control the game tempo, gain crucial yards, and support the passing game. Their performance often determines how effectively a team can maintain offensive balance.

How do special teams impact the Oklahoma vs Alabama matchup?

Special teams influence field position, scoring opportunities, and momentum. Strong performances in this area can provide a significant advantage in closely contested games.
